Beyond DeepSeek: Why Everyone Loses When We Race to Build AI

DeepSeek’s arrival reignites U.S.-China competition, raising fears of a destabilizing sprint for AI supremacy.

DeepSeek Shakes Silicon Valley

The January release of DeepSeek R1, a flagship Chinese AI model rivaling top U.S. models, sent shockwaves through Silicon Valley and beyond. The Chinese hedge fund High-Flyer released DeepSeek’s foundational model, V3, in December to little fanfare. But R1’s impact was immediate, triggering market chaos and policy debates.

The R1 model boasts high performance on reasoning tasks, matching and, in some benchmarks, outperforming top U.S. models like OpenAI’s o1 and Anthropic’s Claude 3.5 Sonnet. Its web application distinguished itself with a “DeepThink” mode, which walks users through the model’s “chain of thought” before delivering an answer. But the feature that generated the most attention? The model’s cost.

DeepSeek claims R1 cost just $5.6 million to train, a fraction of the billions U.S. AI labs have spent on their models. Just last month, OpenAI announced a $500 billion, government-backed investment in U.S. AI infrastructure.

Many AI leaders have cast doubt on DeepSeek’s cost estimate, arguing the figure may not account for salaries, research and development, data collection, and failed training runs.

“The $5M number is bogus,” said Oculus founder Palmer Luckey. “It is pushed by a Chinese hedge fund to slow investment in American AI startups, service their own shorts against American titans like Nvidia, and hide sanction evasion.”

Regardless of the number’s accuracy, R1's release has rippled through the economy, media, government, and tech sector.

U.S. stock markets saw a massive sell-off in AI-related stocks, wiping out nearly a trillion dollars in market value. Nvidia's stock value alone plunged by $600 billion in a single day.

Western media outlets sounded alarm bells, describing Meta engineers descending into “war rooms” and reporting on grave cybersecurity implications. U.S. lawmakers have called for a ban on DeepSeek and a reassessment of chip export policies.

AI industry leaders debated the significance of DeepSeek’s release on social media. Some have dismissed its achievements as unimpressive and overhyped. Others have heralded DeepSeek’s arrival as a widening of the game to researchers and developers outside the resource-rich Silicon Valley giants. Marc Andreessen, a leading tech investor, declared on X: “DeepSeek R1 is AI's Sputnik moment.”

America’s Strategy in the AI Arms Race? Export Controls

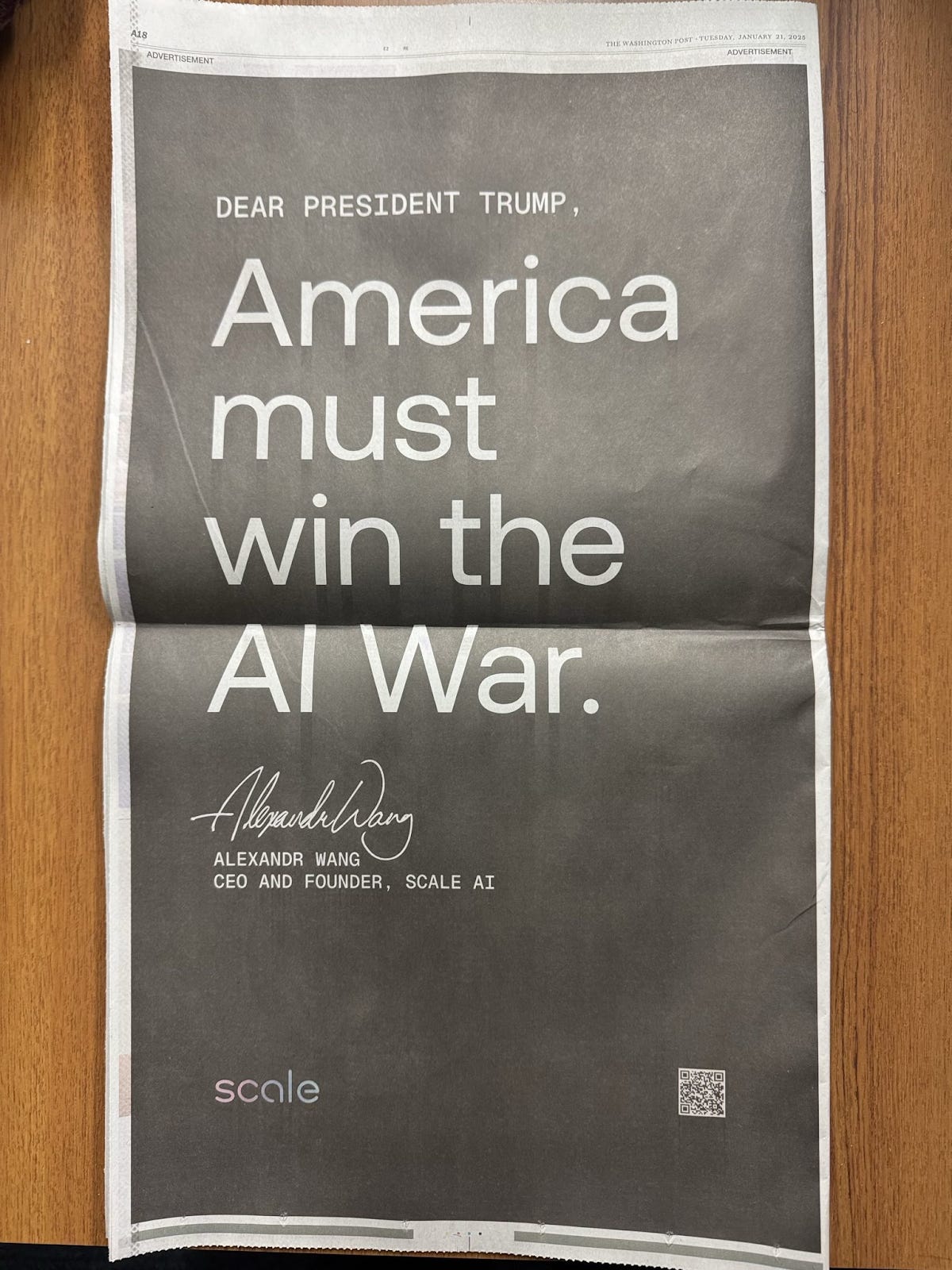

DeepSeek’s release has intensified what industry leaders have dubbed the "AI arms race." U.S. executives and policymakers cite R1 as proof that America must accelerate AI development to maintain dominance. All players are eager to be the first to unlock the next generation of superintelligent AI, known as artificial general intelligence (AGI).

U.S. policymakers argue that AI supremacy is critical for military and economic power. Anthropic CEO Dario Amodei, along with the RAND Corporation, defines the leading national strategy, the “Entente Strategy,” as "a coalition of democracies seek[ing] to gain a clear advantage (even just a temporary one) on powerful AI by securing its supply chain, scaling quickly, and blocking or delaying adversaries' access to key resources like chips and semiconductor equipment."

Strategic control over the AI supply chain is already in motion. In his last week in office, former U.S. President Joe Biden introduced the “Framework for Artificial Intelligence Diffusion,” reinforcing export controls on advanced chips to BRICS nations.

The Entente Strategy hinges on "scaling laws," a principle in AI development hypothesizing that increased computing power—namely through advanced chips and data centers—will directly accelerate AI capabilities. With sufficient computing investments, experts believe it's only a matter of time before AGI surpasses human capabilities. Some AI executives predict AGI's arrival as soon as 2026.

U.S. and China Race as AI Governance Hangs in the Balance

While the United States and China accelerate toward AGI supremacy, they overlook a more urgent race: one between rapid deployment and responsible governance.

In 2024, China introduced the “Artificial Intelligence Safety Risk Governance Framework,” a comprehensive set of guidelines aimed at mitigating AI risks and promoting safe development. While criticized for enforcing ideological control, the framework marks a proactive approach to AI oversight that the U.S. has yet to match.

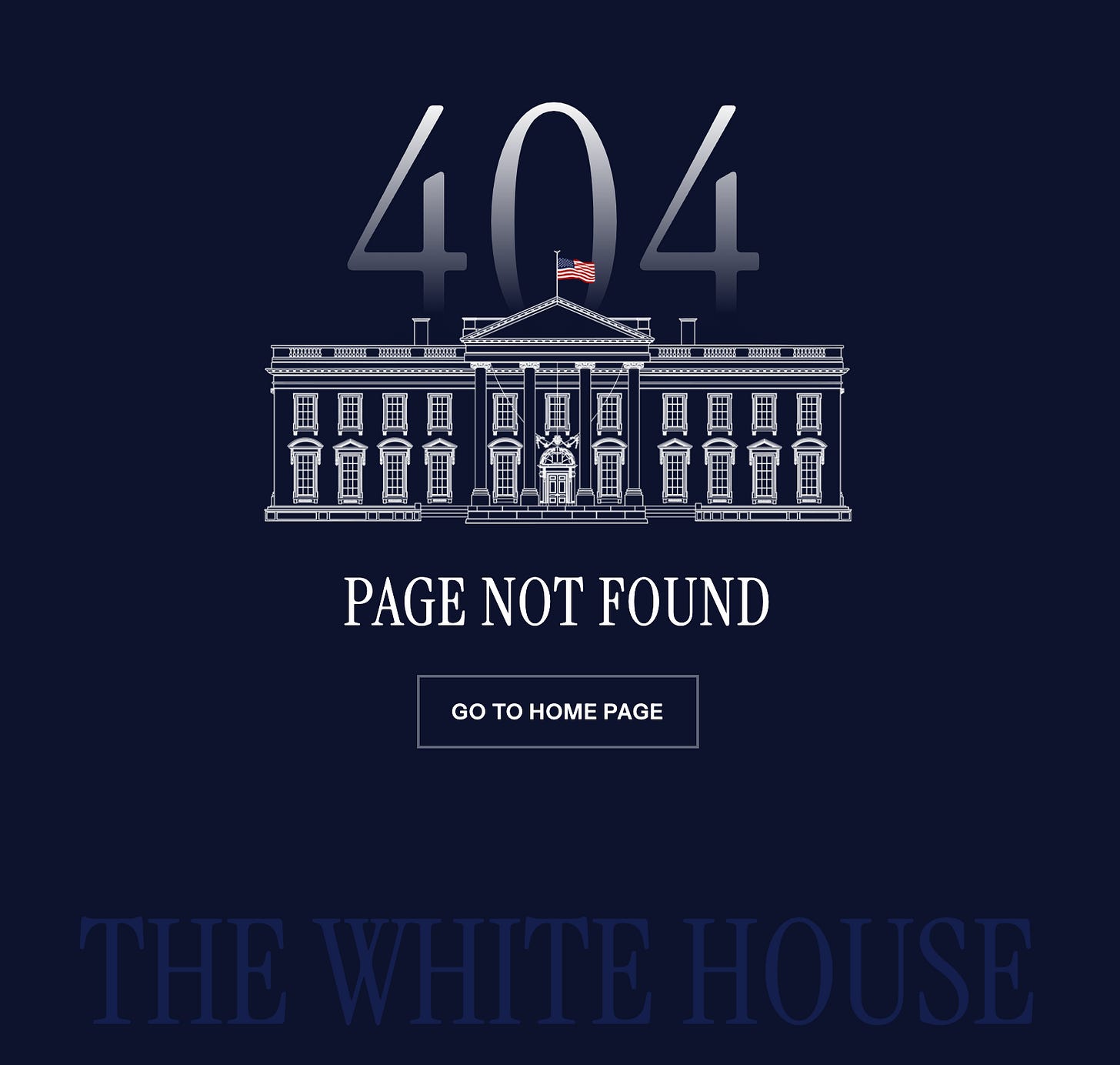

By contrast, the U.S. lacks a unified federal policy regulating AI. On his first day in office, President Donald Trump rescinded Biden's AI safety policy, Executive Order 14110 on the "Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence."

Despite the country's own laissez-faire approach to AI governance, U.S. intelligence agencies warn that Chinese dominance in AI presents catastrophic risks, from military escalation and cyber threats to embedded ideological biases.

DeepSeek has already exposed some of these risks. Security researchers found that DeepSeek failed all standard safety assessments, with jailbreaking attempts achieving a shocking “100 percent attack success rate.” Users have reported that DeepSeek’s models refuse to answer questions about Tiananmen Square or criticism of Chinese President Xi Jinping. Journalists have exposed the Chinese government's prolific use of AI-enabled surveillance technology to monitor and target ethnic minorities, including Uyghur Muslims.

Edward Geist, a policy researcher at the RAND Corporation, warns that “Artificial intelligence and other emerging technologies are already central to military competition. China has used AI for cyber operations against the United States and our allies, as well as for modernizing its military systems. And although there are peaceful uses for AI, much like nuclear technology, the risks of misuse could be severe.”

Yet mainstream criticism of China’s AI development often obscures the U.S.'s own history of and potential for AI misuse. High-profile cases have exposed U.S. police agencies for erroneously arresting Black individuals based on false AI facial recognition matches. During the 2024 election cycle, AI-generated robocalls mimicking President Biden's voice discouraged people from voting, a stark example of how unregulated AI tools are already being weaponized for political manipulation.

Meanwhile, the growing concentration of AI power among a handful of tech billionaires has deepened concerns about U.S. regulation. Silicon Valley elites—including Elon Musk, Mark Zuckerberg, and Sam Altman—have sought to ally themselves with the Trump administration. Their presence at the presidential inauguration, alongside massive donations and legal settlements to win over the administration, has raised concerns about their oligarchic political influence.

Western policymakers and AI executives have used the DeepSeek panic to amplify fears of China’s AI dominance. While concerns about China’s AI advances are not unfounded, a greater threat lies in the consensus to accelerate AI development with diminished regard for governance. This reactionary stance fuels an arms race that prioritizes power over precaution. The real race is not which nation builds the most powerful AI first; it’s whether humanity recognizes that sacrificing safety for speed is a losing game.

OpenAI’s Safety Exodus Signals an Industry in Crisis

The perception of an AI arms race isn't just reshaping geopolitics—it's already compromising safety at the industry's leading labs.

Raluca Csernatoni, a researcher at the Carnegie Endowment for International Peace, warns that “the pressure to outpace public and private sector adversaries by rapidly pushing the frontiers of a technology that is still not fully understood or controlled, and without commensurate efforts to make AI safe for humans, may well present insurmountable challenges and risks.”

These risks aren't hypothetical. OpenAI, once a nonprofit dedicated to AI safety, has transformed into a for-profit company valued at $300 billion, and many argue it now prioritizes profit over responsible development.

Internal sources revealed that OpenAI rushed safety testing for GPT-4o, allocating less than a week for the safety checks before launch—a timeline that alarmed many researchers.

The pressure to accelerate development has triggered an exodus of nearly half of OpenAI’s safety staff. Jan Leike and Daniel Kokotajlo both resigned from OpenAI over concerns that the company has not done enough to prevent its AI systems from becoming dangerous.

“The world isn’t ready, and we aren’t ready,” Kokotajlo wrote. “And I’m concerned we are rushing forward regardless and rationalizing our actions.”

This erosion of safety protocols is particularly troubling given the stakes. Expert estimates of the likelihood of catastrophic outcomes from advanced AI vary widely, ranging from 5% to 95%.

Meanwhile, the policy realm lags even further behind. Despite a March 2023 open letter from tech leaders—including Tesla CEO Elon Musk and AI pioneer Yoshua Bengio—calling for a pause in advanced AI development, the race continues to accelerate. Without adequate governance frameworks, we risk unleashing technologies whose consequences we can neither predict nor control.

Can the World Move Beyond the AI Arms Race?

DeepSeek's emergence presents a unique opportunity to rethink the AI race entirely.

AI progress thus far has been concentrated in the hands of a few Silicon Valley giants, whose billion-dollar compute budgets determine the pace of innovation. DeepSeek’s success challenges this monopoly and hints at a future in which open-source models and lower computing costs could diversify AI research and safety efforts.

But the bigger question isn’t who builds the most powerful AI; it’s how we prepare for the intelligence already emerging. As Ethan Mollick, a professor at the Wharton School and one of TIME's Most Influential People in Artificial Intelligence, says:

“What concerns me most isn’t whether the labs are right about their timelines. It’s that we’re not adequately preparing for what even current levels of AI can do. These aren't questions that AI developers alone can or should answer—they demand attention from organizational leaders, employees whose work lives may transform, and stakeholders whose futures may depend on these decisions.”

AI governance today is a fragmented mess. “Instead of a cohesive global regulatory approach,” says Csernatoni, “what has emerged is a mosaic of national policies, multilateral agreements, high-level and stakeholder-driven summits, declarations, frameworks, and voluntary commitments.”

Moving forward, the fundamental challenge will be whether nations and communities can align on governance that mitigates the risks of AI.

“Given the cultural divides, differing value judgments, and geopolitical competition, it is uncertain whether such a unified framework is achievable,” Csernatoni acknowledges. And yet, unprecedented global cooperation (in the vein of the International Atomic Energy Agency or U.S.-China Clean Energy Cooperation) may be critical to ushering in a safe AI future.

If left unchecked, the AI arms race could become a self-fulfilling prophecy, pushing development forward recklessly while sidelining safety. But DeepSeek’s rise offers a chance to shift focus. For the real race isn't between nations—it's between responsible innovation and the consequences of unchecked ambition.

As Mollick puts it, "The flood of intelligence that may be coming isn't inherently good or bad—but how we prepare for it, how we adapt to it, and most importantly, how we choose to use it, will determine whether it becomes a force for progress or disruption."

DeepSeek isn't China's "Sputnik moment." It's a wake-up call for the entire world to reconsider what progress in AI really means—and what we risk losing in the race to claim victory.